
7 Critical Questions: Complete Electronic Monitoring RFP Template for 2026 Procurement

April 16, 2026 · EM program operations & GPS ankle monitor procurement

If you are a county IT director, a statewide electronic monitoring program manager, or a private EM vendor preparing a government submittal, the same failure mode appears every cycle: the electronic monitoring RFP template asks for brand names, monthly rates, and warranty months—but not for the architecture that drives field workload. That omission is expensive. It is also why this guide reframes GPS ankle monitor procurement around seven evidence-based questions that map directly to officer hours, court credibility, and lifecycle risk.

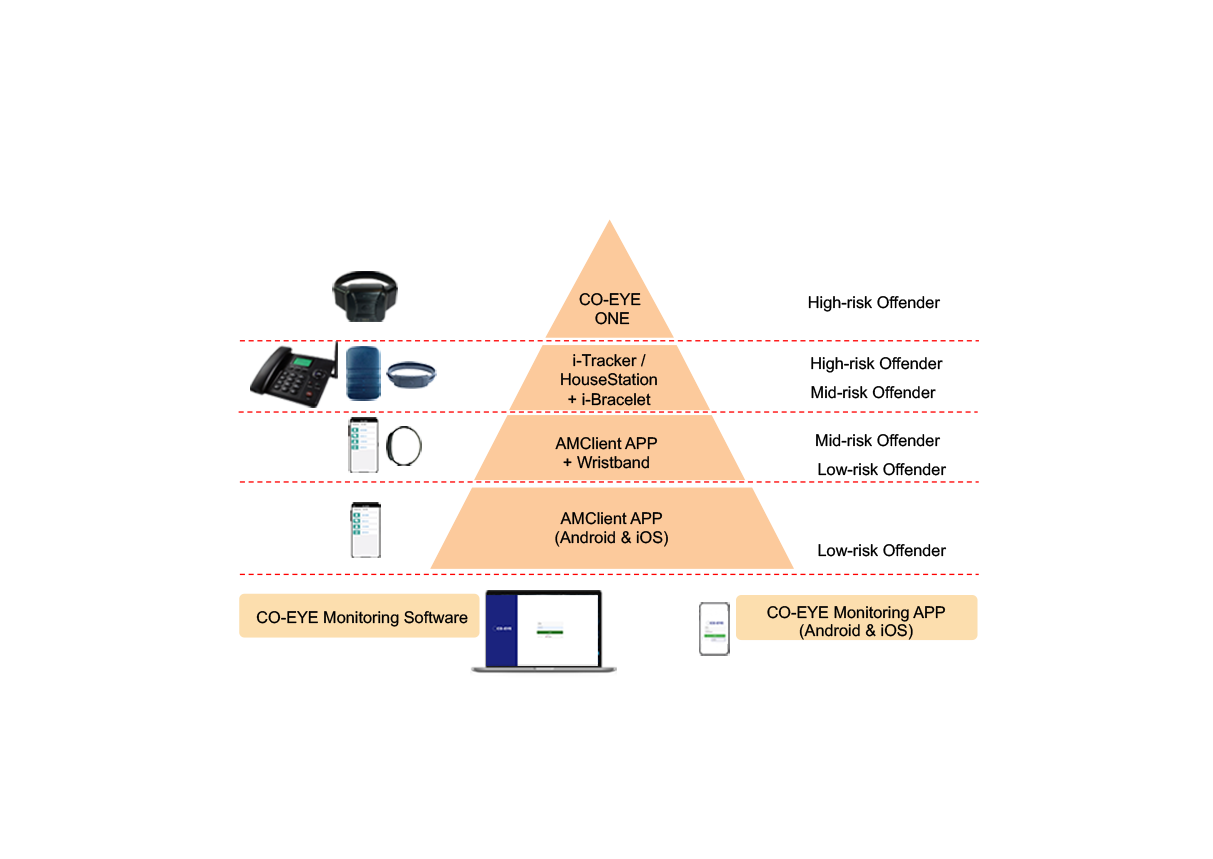

This article delivers a practical electronic monitoring vendor RFP scoring approach for EM equipment RFP 2026 cycles, including a copy-ready HTML table you can paste into Word or Google Docs. It is written from the RTLS Command Network operations perspective: we care about dashboards, alert fatigue, and fleet sustainment—not brochure claims. When you need manufacturer specifications and product references after the scoring pass, use REFINE Technology’s public pages for the CO-EYE ONE GPS ankle monitor and the broader CO-EYE product lineup as a benchmark for what “full evidence” looks like.

Why most ankle monitor bid evaluation rubrics under-weight operations

Traditional scoring sheets reward lowest monthly service fees. Fees matter, but they rarely dominate total cost of ownership (TCO) once you add: (1) officer and supervisor time spent on low-battery and “lost signal” workflows; (2) field visits triggered by tamper false positives; (3) help-desk minutes for charging accessories; (4) two-piece system reconciliation when the ankle module and cellular companion drift apart; and (5) accelerated replacement when carriers retire legacy cellular bands. A serious ankle monitor bid evaluation therefore begins by forcing vendors to disclose how their hardware architecture creates or removes those costs—not just how they price seats on a server.

NIJ-sponsored practitioner literature has long highlighted location accuracy targets, but day-to-day program managers feel a different pain: alert volume and device availability. Your electronic monitoring RFP template should make false-alert behavior and power-state behavior first-class evaluation categories, at equal priority to GNSS accuracy on a datasheet.

How to use this EM equipment RFP 2026 scoring model

Assign each major criterion a weight that reflects your jurisdiction’s caseload. Urban programs with dense RF environments may weight connectivity resilience and dead-zone mitigation higher. Rural programs with long highway travel may weight assisted battery modes and standalone LTE endurance higher. Domestic-violence caseloads may weight rapid install and discreet mass higher than average. Regardless of weighting, keep vendor responses in a single matrix so protest reviewers can see comparable evidence.

Require attachments: lab or third-party test summaries, redacted field telemetry samples, written 3G sunset statements, and a TCO workbook with formulas—not marketing PDFs alone. Where a vendor cannot produce evidence, score conservatively. GPS ankle monitor procurement is a decade-long decision; missing evidence is a signal.

Procurement annexes your legal team will thank you for

Modern electronic monitoring vendor RFP packets rarely fail on price alone; they fail on ambiguous performance language that makes corrective action impossible after award. Add annexes that define: (1) minimum device firmware update cadence and emergency patch windows; (2) maximum allowable downtime for supervisory dashboards during planned maintenance; (3) data residency and encryption standards for location histories and officer notes; (4) API or batch export formats for court discovery and prosecutor portals; and (5) chain-of-custody procedures for device retrieval after tamper signals. Even when your primary concern is hardware, the monitoring platform is where your users spend twelve hours a day.

For corrections IT, explicitly require integration hooks your county already operates: SAML/OIDC for identity, syslog or CEF for SIEM ingestion, and role-based access that separates call-center staff from investigative supervisors. If a vendor proposes a “walled garden” with PDF-only exports, score that as operational debt—you will pay for CSV rebuilds and manual transcription in every major case.
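To make the SIEM requirement concrete in a bidder conference, it can help to show what a single alert looks like as a CEF line. The sketch below follows the general CEF layout (a pipe-delimited header followed by key=value extensions); the vendor name, product name, event code, and field values are hypothetical placeholders, not any bidder's actual export.

```python
def _escape_header(value: str) -> str:
    # CEF header fields escape backslash and pipe with a backslash.
    return value.replace("\\", "\\\\").replace("|", "\\|")


def _escape_extension(value: str) -> str:
    # CEF extension values escape backslash and equals; newlines become \n.
    return value.replace("\\", "\\\\").replace("=", "\\=").replace("\n", "\\n")


def cef_event(vendor, product, version, event_class_id, name, severity, extensions):
    """Build one CEF:0 line from header fields and an extension dict."""
    header_fields = (vendor, product, version, event_class_id, name, severity)
    header = "|".join(["CEF:0"] + [_escape_header(str(f)) for f in header_fields])
    extension = " ".join(
        f"{key}={_escape_extension(str(val))}" for key, val in extensions.items()
    )
    return f"{header}|{extension}"


line = cef_event(
    vendor="ExampleEM",             # hypothetical vendor name
    product="MonitorPlatform",      # hypothetical product name
    version="1.0",
    event_class_id="TAMPER_STRAP",  # hypothetical alert type code
    name="Strap tamper alert",
    severity=8,
    extensions={"duser": "officer.jane", "msg": "strap open; device=ABC123"},
)
print(line)
```

Asking each bidder to supply a handful of real sample lines in this shape, one per alert type, lets your security team confirm the feed parses before award rather than after.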

Alert economics: a worked example you can paste into staff workshops

Consider a mid-size program supervising five hundred active GPS enrollees. If legacy LTE-only devices generate, hypothetically, forty low-battery or “communication loss” alerts per day that each consume fifteen minutes of combined call-center and field verification time at a blended forty-five dollars per fully loaded hour, that is ten staff hours and $450 per day, or roughly $164,000 per year in labor before you add fuel, overtime, or vehicle wear. That arithmetic is not theoretical: it is why connectivity and battery questions belong at the top of your electronic monitoring RFP template, not buried on page forty-seven of a vendor’s boilerplate response.
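For staff workshops, the same calculation can be run with your own inputs. This is a minimal sketch using the hypothetical figures from the paragraph above; the function name and the 365-day year are assumptions for illustration.

```python
def annual_alert_labor_cost(alerts_per_day, minutes_per_alert, hourly_rate, days_per_year=365):
    """Annualized labor cost, in dollars, of working a daily alert volume."""
    hours_per_day = alerts_per_day * minutes_per_alert / 60
    return hours_per_day * hourly_rate * days_per_year


# Hypothetical inputs from the worked example: 40 alerts/day, 15 minutes each, $45/hour.
cost = annual_alert_labor_cost(alerts_per_day=40, minutes_per_alert=15, hourly_rate=45)
print(f"${cost:,.0f} per year")  # $164,250 per year
```

Halving either the alert count or the minutes per alert halves the result, which is a quick way to show supervisors what an architecture change is worth in labor terms.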

Now layer tamper false positives: even a handful per week per hundred devices can collapse trust with courts and defense counsel. When attorneys argue that a bracelet “cries wolf,” judges discount future telemetry. That reputational damage is absent from most TCO spreadsheets but dominates real program outcomes. This is the ethical reason tamper modality belongs in the same weighted block as connectivity—not as a footnote under “accessories.”

Reference checks that actually predict deployment pain

Ask peer agencies not only “Are you happy?” but: average time-to-enroll after court order, percentage of devices returned for manufacturer defect in the first ninety days, average monthly alerts per supervised person stratified by alert type, and whether the agency had to hire temporary staff during peak enrollment. Cross-check answers against the vendor’s proposed staffing model in their TCO workbook. Mismatches here predict change-order battles.

For ankle monitor bid evaluation specifically, request anonymized histograms of install duration from at least two reference sites with caseloads within fifty percent of yours. Small pilots can hide skill concentration; broad histograms reveal training scalability.

Seven decision-critical questions (the “architecture-aware” set)

The following seven questions translate directly into contract language. They are ordered so that connectivity and power architecture come first—because those choices constrain everything downstream, from charging policy to dead-zone alert rates.

1) Connectivity modes: multi-mode (BLE + WiFi + LTE) versus LTE-only

Ask whether the device can automatically shift among companion-assisted low-power links, WiFi-directed telemetry when an access point is provisioned, and full LTE/GNSS standalone when the supervised person is away from home or phone. LTE-only designs must burn the cellular modem whenever the device is expected to report, which is why so many fleets plateau in the 24–72 hour battery band despite incremental firmware tuning. Multi-mode designs can keep supervision continuous while shifting the power budget to the lowest-power adequate radio.

RFP must-have language: “Vendor shall describe all supported connectivity modes, automatic switching logic, and test evidence for mode transitions without manual user action.”

2) Battery life: standalone LTE versus assisted BLE/WiFi modes

Demand explicit run-times at stated reporting intervals for: (a) LTE standalone; (b) WiFi-directed reporting where applicable; and (c) BLE-connected offload to an approved companion (agency phone app, home beacon, or similar). Require that vendors publish the duty cycle assumptions behind each number. Programs evaluating next-generation hardware should treat assisted modes as operational features, not “demo modes,” because they change how often staff chase chargers.

As a benchmark example only—not a substitute for your own acceptance test—REFINE Technology publishes CO-EYE ONE-AC class figures in the range of roughly seven days LTE standalone (typical reporting cadence), on the order of three weeks in WiFi-directed configuration, and up to roughly six months when BLE-connected offload is available and correctly paired. Your worksheet should still require vendor numbers under your mandated intervals.
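To see why the duty cycle assumptions matter, a rough average-current model is enough. The sketch below is a simplification that ignores GNSS acquisition, temperature, and battery aging, and every electrical figure in it is a hypothetical placeholder rather than a published number for CO-EYE or any other device.

```python
def estimated_runtime_days(capacity_mah, sleep_ma, active_ma,
                           active_seconds_per_report, report_interval_s):
    """Estimate runtime from battery capacity and a two-state duty cycle."""
    if not 0 < active_seconds_per_report <= report_interval_s:
        raise ValueError("active time must be positive and fit inside the interval")
    duty = active_seconds_per_report / report_interval_s      # fraction of time the radio is on
    average_ma = active_ma * duty + sleep_ma * (1 - duty)     # time-weighted average current
    return capacity_mah / average_ma / 24                     # hours converted to days


# Hypothetical device: 1000 mAh cell, 0.5 mA asleep, 100 mA while reporting for 10 s.
for interval_s in (60, 300, 900):
    days = estimated_runtime_days(1000, 0.5, 100, 10, interval_s)
    print(f"report every {interval_s:>3} s: about {days:.1f} days")
```

A vendor run-time quoted without the reporting interval and active time behind it cannot be compared against a competitor's figure, which is the reason to demand those assumptions in writing.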

3) Tamper sensing modality and false-alert economics

Ask vendors to state the physical principle for strap and case tamper detection (fiber continuity, capacitive loop, resistive mesh, PPG/skin proximity, etc.). Then require false-positive and false-negative rates with study design summarized in non-marketing language. Programs should understand that analog skin-sensing approaches have repeatedly been associated with double-digit false tamper rates in operational discussions; fiber continuity is binary—light passes or it does not—which is why vendors implementing full fiber paths can credibly target zero false tamper alarms for strap/case events when specified correctly.

Add a question on post-depletion tamper evidence if your orders require continued evidentiary integrity after a dead battery event.

4) Mass, ergonomics, and compliance psychology

Heavier bracelets increase skin irritation complaints, footwear conflicts, and attempts to loosen straps—each of which generates non-criminal signals that look like risk in a dashboard. Ask for device mass in grams, strap options (including cut-resistant options for high-risk tiers), and IP rating with test house named. One-piece designs near 108 g (vendor-published class for CO-EYE ONE) illustrate what “lightest commercially positioned one-piece GPS ankle monitors” means in 2026 marketing—but your RFP should specify a maximum mass your clinicians will support, then score lower mass within the acceptable band.

5) Install and removal time, tools, and training burden

Field minutes are budget dollars. Ask for median install and removal times with video evidence or on-site demonstration as part of short-list requirements. Tool-less, snap-fit designs that install in a few seconds reduce training variance across officers and shrink enrollment backlog. If a vendor requires torque drivers, adhesive steps, or multi-part sequencing, capture those steps explicitly in your electronic monitoring vendor RFP attachment because they will recur thousands of times.

6) Cellular bands, 3G/2G sunset, eSIM, and “network agility”

Ask for supported air interfaces (for example, 5G-compatible LTE-M/NB-IoT where applicable), carrier certification posture, and a written migration plan for sunsetting legacy technologies. If your state mixes urban corridors with remote counties, eSIM or dual-SIM strategies can reduce truck rolls when carriers change preferred partners. CO-EYE ONE-AC positions eSIM plus nano SIM as a dual-management example you can cite when writing minimum capability text—then let all bidders prove parity.

7) Total cost of ownership beyond line-item lease

Force a workbook: hardware amortization, service fees, spare parts, average monthly alerts per supervised person by category, assumed minutes per alert, fully loaded labor rate, and charging logistics. Vendors that cannot co-model alerts with you are asking you to carry the integration risk alone. This is the capstone question that separates a serious EM equipment RFP 2026 evaluation from brochure shopping.

Extend TCO to include swap stock: how many spare devices per hundred active units the vendor recommends, typical depot turnaround for water damage, and whether loaner pools are priced separately. Programs in four-season climates should model winter boot interference and summer sweat-related strap adjustments as support-ticket drivers—these micro-events show up as aggregate help-desk load, not as “device failures,” yet they consume the same FTE lines.
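The workbook inputs listed above can be combined into a monthly figure along the following lines. This is a sketch under stated assumptions: the field names are illustrative, spares are assumed to carry hardware cost but no service fee, and the sample inputs are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TcoInputs:
    active_devices: int
    hardware_cost_per_device: float
    amortization_months: int
    monthly_service_fee: float          # per active device
    spares_per_hundred: float           # swap stock per 100 active units
    alerts_per_person_per_month: dict   # alert category -> average count
    minutes_per_alert: dict             # alert category -> staff minutes
    loaded_hourly_rate: float


def monthly_tco(inp: TcoInputs) -> dict:
    """Break monthly cost into hardware, service, and alert labor."""
    fleet = inp.active_devices * (1 + inp.spares_per_hundred / 100)
    hardware = fleet * inp.hardware_cost_per_device / inp.amortization_months
    service = inp.active_devices * inp.monthly_service_fee
    minutes = sum(count * inp.minutes_per_alert[category]
                  for category, count in inp.alerts_per_person_per_month.items())
    labor = inp.active_devices * minutes / 60 * inp.loaded_hourly_rate
    return {"hardware": hardware, "service": service,
            "alert_labor": labor, "total": hardware + service + labor}


# Hypothetical program: 100 active devices with two alert categories.
example = TcoInputs(100, 600.0, 60, 90.0, 10,
                    {"low_battery": 2, "tamper": 1},
                    {"low_battery": 15, "tamper": 30}, 45.0)
print(monthly_tco(example))
```

Keeping alert labor as its own line makes it obvious when a lower service fee is being paid for with officer hours.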

Contract clauses that operationalize the template

Translate your scoring table into enforceable service levels. Examples: credits if monthly average location-reporting uptime falls below a defined threshold; root-cause analysis timelines after any mass firmware regression; quarterly business reviews with alert taxonomy breakdowns; and the right to run independent penetration tests against the vendor’s API perimeter. The goal is not to be adversarial—it is to align incentives so vendor product roadmaps respect your supervision realities.

Finally, reserve the right to re-open technical evaluation if regulatory obligations change mid-contract (for example, new state standards for domestic-violence proximity monitoring). GPS ankle monitor procurement documents that freeze specifications for five years without a change mechanism become liabilities when court orders evolve faster than hardware refresh cycles.

Copy-ready electronic monitoring RFP template table (HTML)

The table below is intentionally wide so it mirrors a real procurement workbook. Copy the block into your CMS or doc system; adjust the weights in the Weighted points column to match your program’s priorities.

| Criterion | Minimum acceptable (example language) | Vendor response summary | Evidence attached (Y/N) | Score 0–5 | Weighted points |
|---|---|---|---|---|---|
| Multi-mode connectivity | Must support automatic failover beyond LTE-only (describe BLE/WiFi/LTE stack). | | | | × 20% |
| Battery: LTE / WiFi / assisted | Declare run-time at mandated reporting intervals for each mode; no hand-waving. | | | | × 20% |
| Tamper modality & false alerts | Written false-positive/false-negative study; describe strap+case sensing. | | | | × 15% |
| Mass & IP rating | Grams and IP68 (or justify lower) with lab certificate reference. | | | | × 10% |
| Install/remove workflow | Median minutes & tools; training hours for new officers. | | | | × 10% |
| Cellular + sunset + eSIM | Band list, carrier certs, 3G/2G exit plan, eSIM if required. | | | | × 10% |
| TCO workbook | Alert model + labor + spares + swap-outs co-signed by vendor SE. | | | | × 15% |

When you normalize scores, keep a “hard fail” gate: any vendor that cannot meet your minimum on tamper evidence or sunset planning should be dropped before subjective price negotiations. That protects public trust and reduces the odds of a mid-contract emergency re-bid.
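The weighting and the hard-fail gate can be applied mechanically once evaluators have entered 0–5 scores. The sketch below uses the example weights from the table; the criterion keys and the gate threshold of 2 are assumptions you would replace with your own definitions.

```python
# Example weights from the template table; they must sum to 100%.
WEIGHTS = {
    "connectivity": 0.20,
    "battery": 0.20,
    "tamper": 0.15,
    "mass_ip": 0.10,
    "install": 0.10,
    "cellular_sunset": 0.10,
    "tco": 0.15,
}
HARD_FAIL_CRITERIA = {"tamper", "cellular_sunset"}
MIN_GATE_SCORE = 2  # hypothetical minimum; set this in your RFP


def weighted_points(scores):
    """Return the weighted 0-5 total, or None if a hard-fail gate is missed."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted criteria")
    if any(not 0 <= s <= 5 for s in scores.values()):
        raise ValueError("each score must be between 0 and 5")
    if any(scores[c] < MIN_GATE_SCORE for c in HARD_FAIL_CRITERIA):
        return None  # dropped before price negotiation
    return sum(WEIGHTS[c] * scores[c] for c in WEIGHTS)


vendor_a = {"connectivity": 4, "battery": 5, "tamper": 4, "mass_ip": 3,
            "install": 4, "cellular_sunset": 3, "tco": 4}
vendor_b = dict(vendor_a, tamper=1)  # strong elsewhere, but no tamper evidence

print(weighted_points(vendor_a))
print(weighted_points(vendor_b))  # None
```

Applying the gate before the weighted sum means a low price or a strong battery score cannot buy back a missing tamper study.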

Optional annex: pilot acceptance tests (paste into SOW)

Attach a thirty-device pilot with success thresholds: e.g., minimum percentage of scheduled reporting intervals successfully received, maximum tamper false positives per device per month, and side-by-side install-time observations against your incumbent fleet. Pilots cost money up front; they save multiples downstream when a bad architecture would have flooded your command center with avoidable noise.
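Writing the thresholds as a pass/fail check before the pilot starts keeps the acceptance decision from drifting afterward. In this sketch the default thresholds (98% of scheduled reports received, at most 0.10 tamper false positives per device per month) are hypothetical placeholders, not recommended values.

```python
def evaluate_pilot(received_reports, scheduled_reports, tamper_false_positives,
                   devices, months,
                   min_report_rate=0.98, max_fp_per_device_month=0.10):
    """Compare pilot telemetry against pre-agreed acceptance thresholds."""
    report_rate = received_reports / scheduled_reports
    fp_per_device_month = tamper_false_positives / (devices * months)
    passed = (report_rate >= min_report_rate
              and fp_per_device_month <= max_fp_per_device_month)
    return {"report_rate": report_rate,
            "fp_per_device_month": fp_per_device_month,
            "passed": passed}


# Hypothetical thirty-device pilot over three months.
print(evaluate_pilot(received_reports=9850, scheduled_reports=10000,
                     tamper_false_positives=4, devices=30, months=3))
```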

Structure the pilot as an A/B cohort: half incumbent devices, half challenger devices, randomized within matched risk tiers (urban vs rural, high-charging-risk vs standard). Publish the randomization seed in procurement records so protest defenses can show unbiased assignment. Collect not only uptime but subjective officer workload using a simple five-point Likert after each shift—human factors dominate adoption even when dashboards look green.
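A seeded, stratified assignment of this kind takes only a few lines. The sketch below assumes participants arrive as (id, risk tier) pairs and that an odd-sized tier gives its extra person to the challenger cohort; both choices are illustrative. Record the Python version alongside the seed, since shuffle output is not guaranteed to be identical across interpreter versions.

```python
import random


def assign_cohorts(participants, seed):
    """Randomize within each risk tier; returns {participant_id: cohort}."""
    rng = random.Random(seed)  # dedicated generator so the seed fully determines the result
    by_tier = {}
    for participant_id, tier in participants:
        by_tier.setdefault(tier, []).append(participant_id)

    assignment = {}
    for tier in sorted(by_tier):      # fixed tier order keeps the draw reproducible
        ids = sorted(by_tier[tier])   # fixed starting order before shuffling
        rng.shuffle(ids)
        half = len(ids) // 2
        for index, participant_id in enumerate(ids):
            assignment[participant_id] = "incumbent" if index < half else "challenger"
    return assignment


# Hypothetical thirty-person pilot: 16 urban and 14 rural participants.
pilot = [(f"P{i:02d}", "urban" if i < 16 else "rural") for i in range(30)]
cohorts = assign_cohorts(pilot, seed=2026)
print(sum(1 for c in cohorts.values() if c == "incumbent"), "incumbent devices")
```

Anyone holding the seed and the participant list can rerun the function and confirm the assignment, which is the point of publishing the seed in procurement records.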

Include a “stress week” inside the pilot window: simulated carrier maintenance windows, deliberate basement reporting tests where WiFi assistance is expected, and a supervised lab walk-through of strap adjustment scenarios. If your electronic monitoring RFP template never tests edge cases, vendors will optimize demos for ideal conditions only.

Governance: who owns the scorecard after award?

Assign a single accountable owner—typically a chief probation officer, EM program director, or CIO delegate—for quarterly rescoring against the original matrix. New firmware can improve or degrade battery behavior; carrier sunsets can invalidate prior band claims; reference sites can change ownership. Without governance, the elegant spreadsheet you built during EM equipment RFP 2026 selection becomes shelfware while operations quietly drift.

Publish internally a one-page “architecture doctrine” summarizing why multi-mode connectivity, tamper modality, and TCO transparency were weighted the way they were. When political leadership asks why you did not pick the cheapest monthly fee, the doctrine explains—in plain language—that supervision programs buy outcomes and officer time, not radios alone.

Closing recommendation

The best electronic monitoring RFP template is the one your attorneys, IT security team, and field supervisors can defend in a protest hearing. Anchor it to architecture, evidence, and TCO—not slogans. When you are ready to compare a next-generation multi-mode GPS ankle monitor against incumbents on neutral testbeds, start from the published CO-EYE specifications on ankle-monitor.com/coeye-one/ and the full portfolio context on ankle-monitor.com/products/, then demand parity proofs from every bidder.
